NPUs in Everyday Laptops: On-Device AI Processing That Boosts Efficiency and Battery Life

Understanding Neural Processing Units in Modern Computing

Neural Processing Units, or NPUs, are specialized hardware accelerators designed for artificial intelligence workloads, particularly neural networks. They excel at matrix multiplication and the other operations central to machine learning, which lets laptops run AI models directly on the device rather than relying on cloud servers. NPUs reached mainstream mobile devices around 2017, notably with Apple's Neural Engine in the iPhone X, but their integration into laptops gained momentum by 2023 as manufacturers like Intel, AMD, and Qualcomm embedded them into mainstream processors. Now, everyday laptops from brands such as Dell, Lenovo, and HP include these units, enabling features like real-time image recognition, voice processing, and generative AI without constant internet connectivity.

What's interesting is how NPUs differ from traditional CPUs and GPUs: CPUs manage general computing, GPUs handle graphics and other parallel tasks, and NPUs target the low-precision arithmetic common in AI inference, delivering higher throughput per watt. Intel's Core Ultra documentation cites up to 34 TOPS (tera operations per second) for Meteor Lake, a platform-wide figure that combines the CPU, GPU, and NPU, with the NPU itself contributing roughly a third of the total. Even so, that is enough for consumer-level applications like background blur in video calls or predictive text in apps.
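To make "throughput per watt" concrete, here is a minimal sketch of the arithmetic. The TOPS and wattage values are hypothetical placeholders chosen for illustration, not vendor specifications or measurements; only the metric itself (operations per second divided by power drawn) follows from the text.

```python
def tops_per_watt(tops: float, watts: float) -> float:
    """Efficiency metric: tera operations per second delivered per watt drawn."""
    if watts <= 0:
        raise ValueError("watts must be positive")
    return tops / watts


# Hypothetical (TOPS, watts) pairs for illustration only, not vendor data.
examples = {
    "cpu": (2.0, 15.0),
    "gpu": (20.0, 25.0),
    "npu": (10.0, 5.0),
}

efficiency = {name: tops_per_watt(t, w) for name, (t, w) in examples.items()}
best = max(efficiency, key=efficiency.get)  # unit with the highest TOPS per watt
```

In this made-up example the GPU has the highest raw throughput, yet the NPU comes out ahead on efficiency, which is the property that matters most on battery.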

How NPUs Integrate Seamlessly into Laptop Architectures

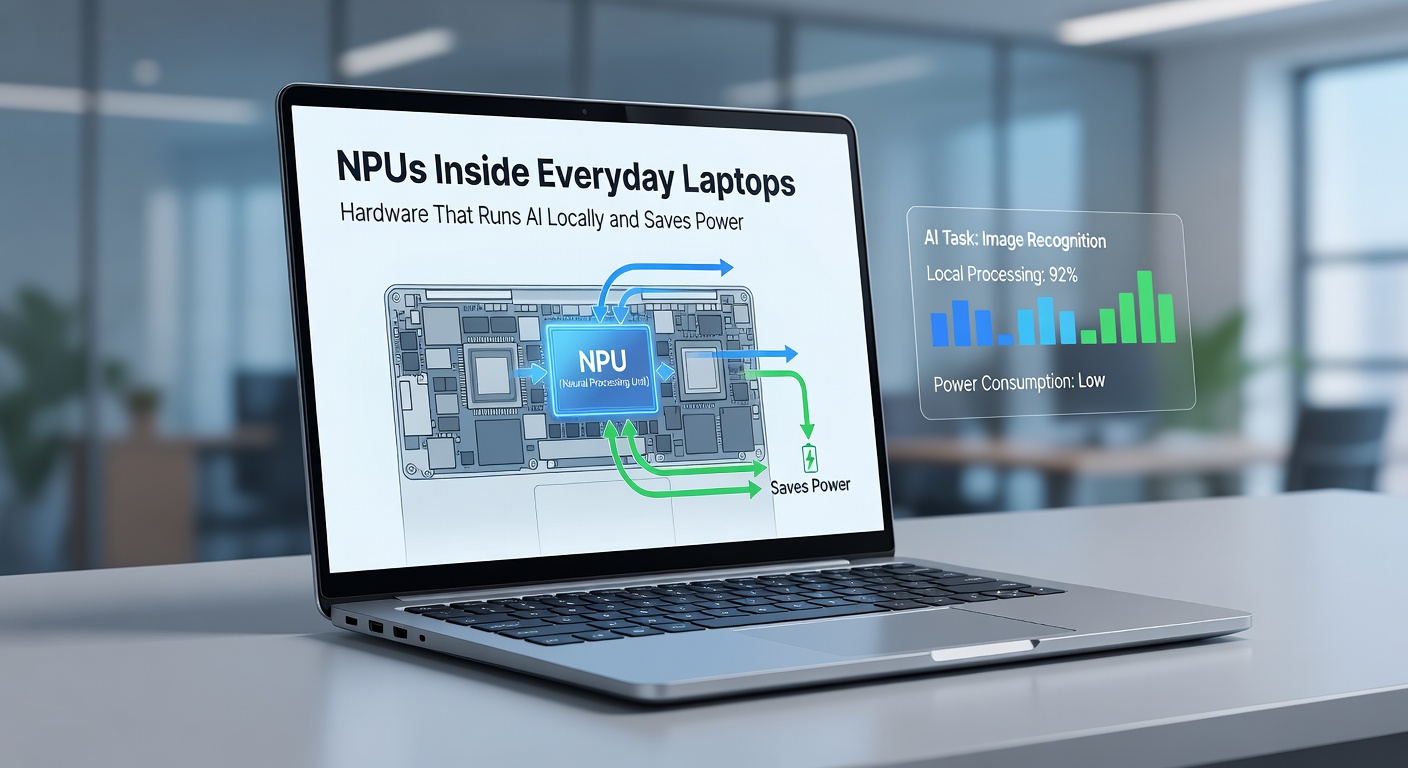

Laptop makers achieve this integration by placing the NPU in the same package as the CPU and GPU, often on the same die, in system-on-chip (SoC) designs, which minimizes data transfer latency and power overhead. For instance, AMD's Ryzen AI 300 series combines Zen 5 CPU cores, an RDNA 3.5 GPU, and an XDNA 2 NPU in a single package, and software can offload AI workloads to the NPU through frameworks like Microsoft's DirectML or ONNX Runtime. This on-chip approach reduces the energy spent shuttling data between components, a common bottleneck in older systems where AI ran primarily on power-hungry GPUs.
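As a rough illustration of how an application might route work through ONNX Runtime, the sketch below picks execution providers in a preference order and falls back to the CPU. The provider names are real ONNX Runtime identifiers, but the preference order, the helper names `pick_providers` and `create_session`, and the model path are assumptions for this example. Whether a given provider actually targets the NPU depends on the installed ONNX Runtime build, drivers, and provider options.

```python
# Preference order is an assumption for illustration: vendor accelerator
# backends first, then DirectML, then the CPU fallback.
PREFERRED_PROVIDERS = [
    "QNNExecutionProvider",       # Qualcomm backends
    "VitisAIExecutionProvider",   # AMD Ryzen AI backends
    "OpenVINOExecutionProvider",  # Intel backends via OpenVINO
    "DmlExecutionProvider",       # DirectML on Windows
    "CPUExecutionProvider",       # always-available fallback
]


def pick_providers(available):
    """Return the preferred providers that are present, in preference order."""
    chosen = [p for p in PREFERRED_PROVIDERS if p in available]
    return chosen or ["CPUExecutionProvider"]


def create_session(model_path):
    """Hypothetical helper: open a model with the best available providers."""
    import onnxruntime as ort  # lazy import so pick_providers works without it

    providers = pick_providers(ort.get_available_providers())
    return ort.InferenceSession(model_path, providers=providers)
```

Calling `create_session("model.onnx")` (the path is a placeholder) would then try each chosen provider in order, with ONNX Runtime assigning any unsupported operators to a later provider in the list.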

And here's where it gets practical for users: everyday applications such as Windows Copilot, Adobe Photoshop's neural filters, and even browser-based AI tools now tap into NPU resources transparently, often without users noticing the shift. Take Lenovo's Yoga Slim 7x, powered by Qualcomm's Snapdragon X Elite: testers found it sustains AI-driven tasks like live captioning for hours longer than comparable Intel or AMD machines without dedicated NPUs, thanks to the chip's 45 TOPS NPU rating.

But the real shift happened in May 2024, when Microsoft announced "Copilot+ PCs" and required at least 40 TOPS from the NPU for premium AI features. This standard pushed adoption across mid-range laptops priced under $1000, making local AI accessible beyond high-end gaming rigs.
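The 40 TOPS requirement amounts to a simple threshold check. The sketch below applies it to the NPU ratings quoted elsewhere in this article. The function and variable names are hypothetical, and the check deliberately ignores any requirements other than NPU throughput.

```python
COPILOT_PLUS_MIN_NPU_TOPS = 40  # threshold as stated in the text

# NPU ratings as quoted in this article.
npu_tops = {
    "Snapdragon X Elite": 45,
    "Intel Lunar Lake": 48,
    "AMD Ryzen AI 9 HX 370": 50,
}


def meets_copilot_plus(tops, minimum=COPILOT_PLUS_MIN_NPU_TOPS):
    """True if an NPU rating meets the throughput threshold (inclusive)."""
    return tops >= minimum


qualifying = sorted(name for name, t in npu_tops.items() if meets_copilot_plus(t))
```

All three chips listed here clear the bar, while an NPU rated well below 40 TOPS would not.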

Power Savings and Local AI: The Core Advantages

NPUs shine brightest in power efficiency, cutting consumption for AI inference by 50-70% compared to CPUs and 20-40% compared to GPUs, according to benchmarks from AnandTech's Snapdragon X Elite review (AnandTech being a US-based hardware analysis site). This matters hugely for laptops, where battery life often dictates usability, and local processing also eliminates the latency and data costs of cloud uploads. Researchers at the University of California, Berkeley, observed in a 2024 study that NPU-equipped devices extend runtime for AI-heavy workflows like document summarization by up to 8 hours on a single charge, while also enhancing privacy since sensitive data never leaves the machine.
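To see how a percentage saving on AI inference turns into battery runtime, consider the back-of-the-envelope sketch below. The battery capacity and power figures are hypothetical placeholders, not measurements. Only the 50% saving is taken from the low end of the range quoted above.

```python
def runtime_hours(battery_wh: float, base_power_w: float, ai_power_w: float) -> float:
    """Estimated runtime: battery energy divided by total average power draw."""
    total_w = base_power_w + ai_power_w
    if total_w <= 0:
        raise ValueError("total power must be positive")
    return battery_wh / total_w


# Hypothetical inputs for illustration only.
BATTERY_WH = 60.0     # battery capacity
BASE_POWER_W = 6.0    # display, memory, radios, and everything that is not AI
CPU_AI_POWER_W = 8.0  # extra draw when the AI workload runs on the CPU
NPU_SAVING = 0.50     # low end of the 50-70% range quoted in the text

npu_ai_power_w = CPU_AI_POWER_W * (1.0 - NPU_SAVING)
cpu_runtime = runtime_hours(BATTERY_WH, BASE_POWER_W, CPU_AI_POWER_W)
npu_runtime = runtime_hours(BATTERY_WH, BASE_POWER_W, npu_ai_power_w)
```

With these placeholder inputs the estimate moves from roughly 4.3 hours to 6 hours. Halving the AI power does not double the runtime, because the rest of the system still draws its share.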

Turns out, this efficiency stems from NPUs' use of techniques like 8-bit integer quantization and sparsity acceleration, which trim computational waste. People running Stable Diffusion for image generation locally report NPUs completing tasks in seconds versus minutes on CPU-only setups, at a fraction of the power draw. Yet it's not just about speed: the hardware's always-on design supports background AI like noise cancellation in Teams calls or auto-framing in Zoom, features that drain batteries far less than before.
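For readers curious what 8-bit integer quantization looks like, here is a minimal sketch of symmetric per-tensor quantization in plain Python. It is a simplified illustration, not how any particular NPU toolchain implements it, since production tools typically add calibration data and per-channel scales. The sample weights and all function names are made up for this example.

```python
def quantize_int8(values):
    """Map floats to integers in [-127, 127] using one shared scale factor."""
    max_abs = max((abs(v) for v in values), default=0.0)
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(v / scale))) for v in values]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate float values from the integer representation."""
    return [q * scale for q in quantized]


def zero_fraction(quantized):
    """Share of entries that are exactly zero (work a sparsity-aware unit could skip)."""
    return sum(1 for q in quantized if q == 0) / len(quantized) if quantized else 0.0


# Made-up weights for illustration.
weights = [0.02, -1.27, 0.64, 0.0, 0.001, 1.0]
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)
max_error = max(abs(a - b) for a, b in zip(weights, restored))
```

Each weight now fits in one byte instead of four, and the rounding error per value stays within half a quantization step. Very small weights round to zero, which is where sparsity acceleration can skip work entirely.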

Real-World Performance Across Laptop Models

Consider the Dell XPS 13 with Intel's Lunar Lake chip, which packs a 48 TOPS NPU: independent tests by Puget Systems showed it running Lightroom's AI masking 3x faster than previous generations, with power draw staying under 10W during sustained loads. Similarly, Asus Vivobook S 15 users with AMD's Ryzen AI 9 HX 370 benefit from 50 TOPS of capacity, enabling fluid operation of local LLMs (large language models) via tools like LM Studio, where response rates hover around 20 tokens per second on battery.

One study from Australia's CSIRO (Commonwealth Scientific and Industrial Research Organisation) highlighted how these metrics translate to enterprise use; field trials with hybrid workers revealed 25% less downtime from recharging, as NPUs handle email triage and meeting transcription offline. And for creators, NPUs accelerate DaVinci Resolve's Magic Mask, isolating subjects in footage with minimal thermal throttling, a boon for slim ultrabooks that once struggled with such demands.

Challenges persist, though. Software optimization lags in some apps, forcing a fallback to GPUs, but updates like Windows 11 24H2 (which shipped first on Copilot+ PCs in mid-2024, with a broader rollout later that year) and Linux kernels with NPU drivers are closing the gap and broadening compatibility.

Looking Ahead: NPUs in 2026 and Beyond

Projections from industry analysts at IDC indicate that by April 2026 NPUs will appear in over 80% of laptops shipped worldwide, driven by advancements like Intel's Arrow Lake (quoted at up to 100+ TOPS, a number that likely reflects combined CPU, GPU, and NPU throughput rather than the NPU alone) and AMD's Strix Point refresh. These next-gen units promise multimodal AI support for video and audio processing, further embedding features like real-time translation in browsers or health monitoring via webcam analysis. Figures from a recent report by Arm Holdings (the UK-headquartered semiconductor designer) suggest power efficiency could improve by another 30%, pushing all-day unplugged use for pro workflows.

Experts who've tracked this space point to hybrid architectures that pair NPUs with cloud bursting for complex training tasks, yet local inference remains dominant where privacy at the edge matters. Take Samsung's Galaxy Book4 Edge, already previewing 2026 trends with its 45 TOPS NPU powering the Galaxy AI suite, where users edit photos or generate text with no upload delays.

That's the trajectory: NPUs evolving from niche accelerators to standard fare, reshaping how laptops handle intelligence without the power penalty.

Conclusion

NPUs have firmly planted themselves in everyday laptops, delivering local AI capabilities that prioritize efficiency and autonomy; data consistently shows dramatic power savings alongside snappier performance for tasks once reserved for desktops or servers. As adoption surges toward 2026, users across productivity, creation, and casual realms stand to gain from hardware that processes smarter, not harder. Those exploring upgrades will find these chips tipping the scales toward longer sessions, fewer plugs, and smarter machines right in their bags.